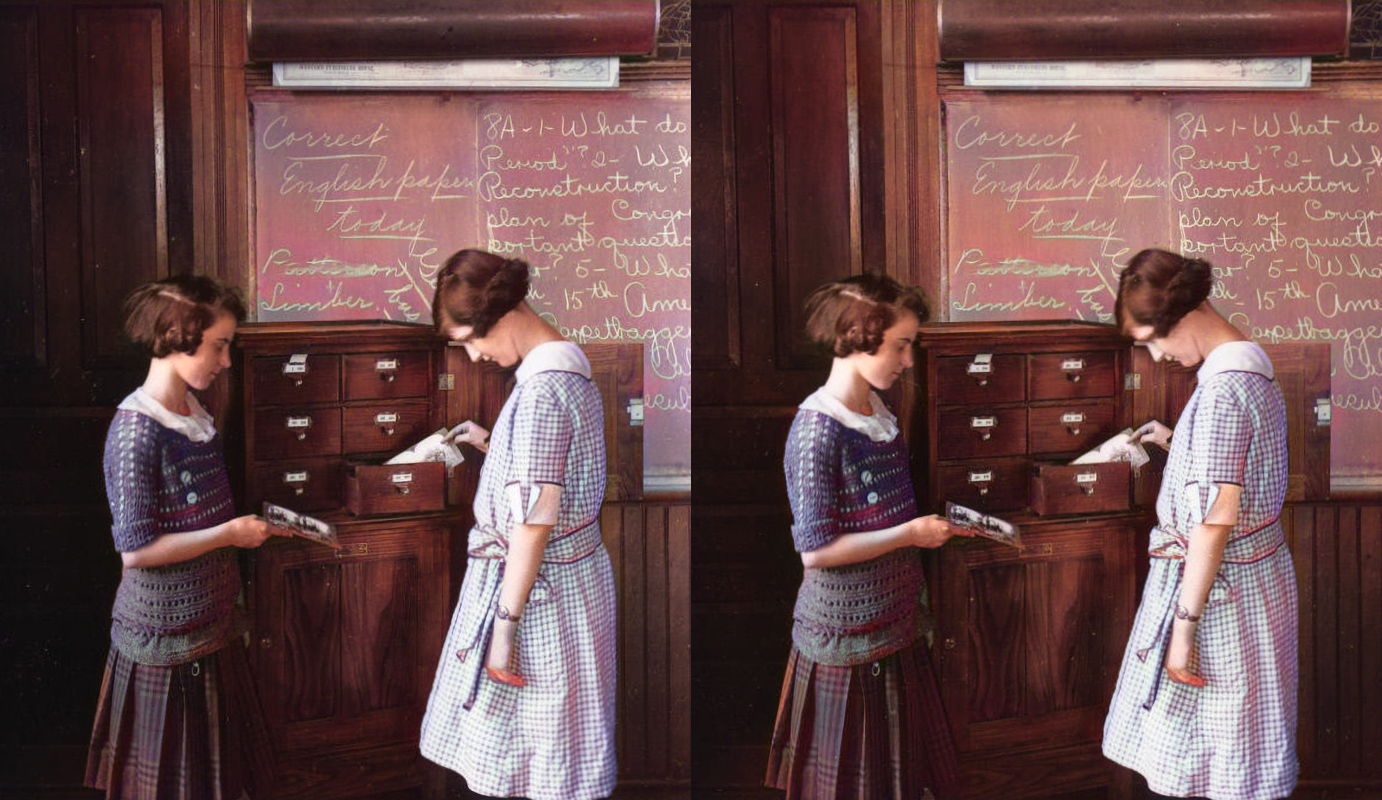

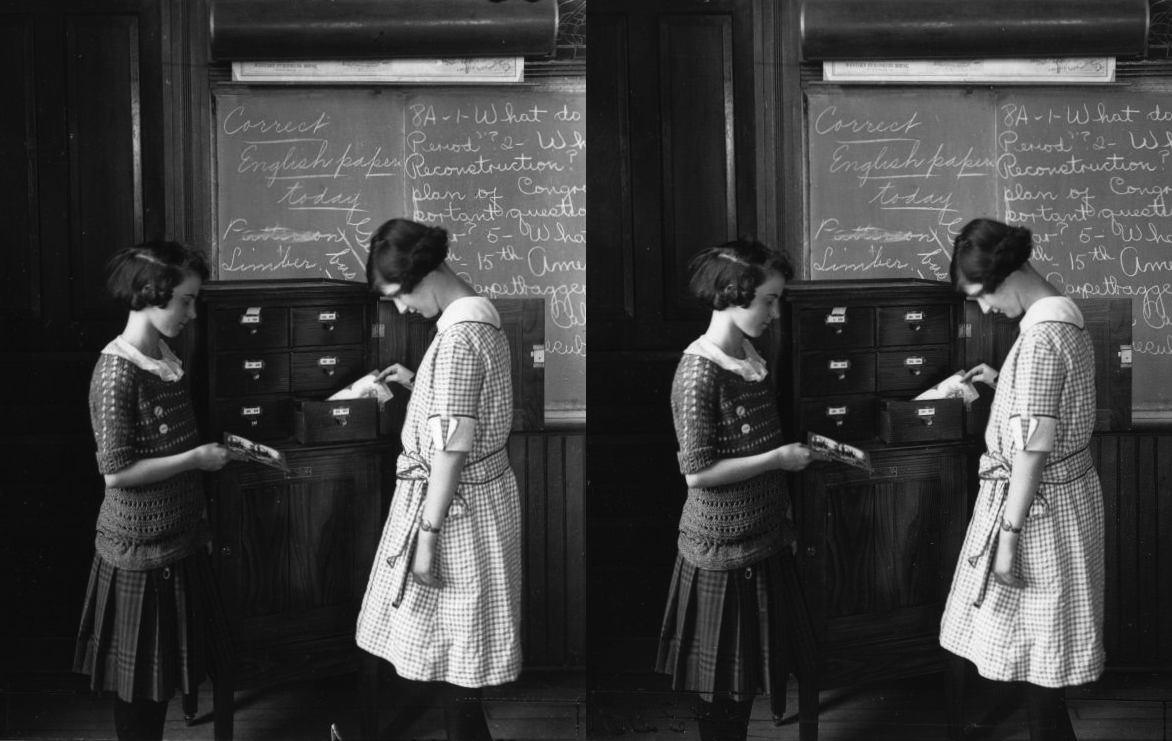

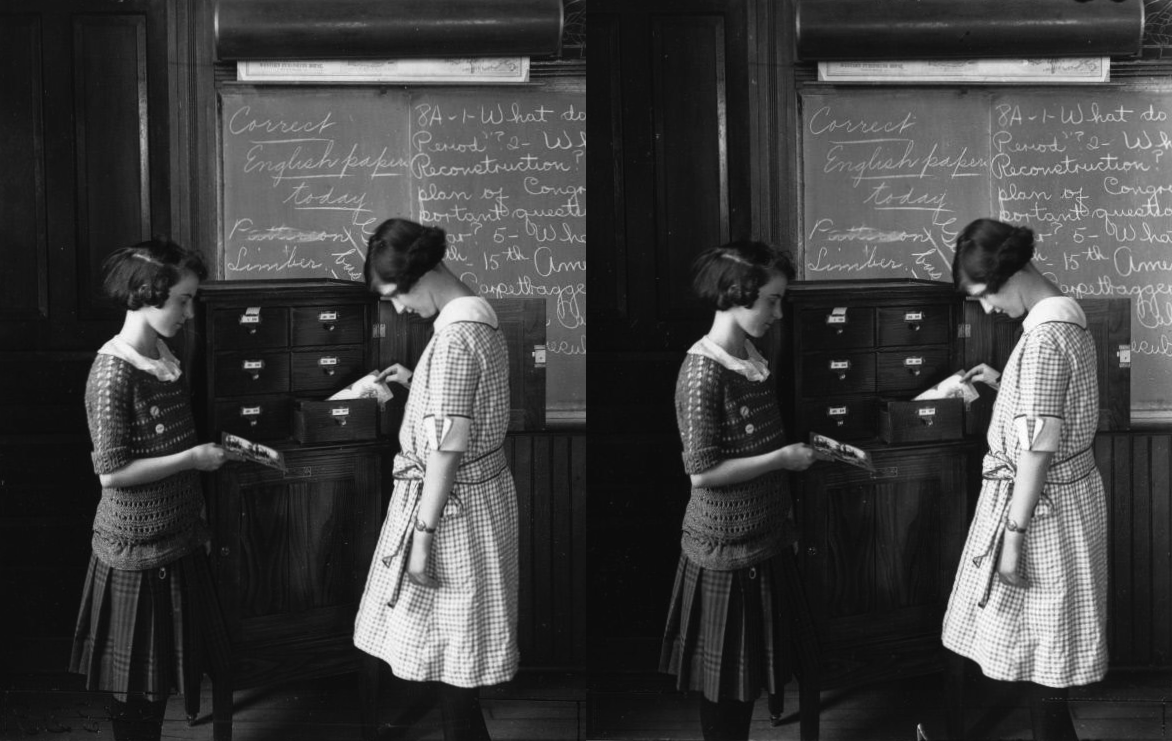

This first image is a classic meta stereocard-of-stereocards, with minor adjustments by me, from the new KeystoneDepth project, “a collection of 37,239 rectified historical stereo image pairs from the Keystone-Mast Collection.” Of special interest to the stereomaker is that these pairs are not only aligned and (sometimes over-)cropped, but also come with depth maps*, enabling all sorts of derived 3D works.

(*The depth maps use the ‘Magma’ colormap, according to my communication with researcher Xuan Luo. Converting them to the grayscale depth maps that programs like StereoPhoto Maker require is tricky. You can either 1) simply desaturate them, at the cost of some detail and contrast, or 2) do it precisely using an inverse CLUT (color lookup table) with advanced ImageMagick techniques, MATLAB, etc.)
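For the curious, here is a minimal Python sketch of the inverse-CLUT idea from option 2. It is only an illustration, not the exact procedure used for these images. The five `MAGMA_STOPS` colors are approximate anchor points of Magma (an assumption), and the function names are made up for this example. A precise conversion should use the full 256-entry Magma table, such as the one Matplotlib ships, in place of the interpolated one.

```python
# Approximate anchor colors of the Magma colormap, dark to light.
# ASSUMPTION: these five stops are rough values for illustration only;
# use the full 256-entry table for a precise conversion.
MAGMA_STOPS = [(0, 0, 4), (81, 18, 124), (182, 54, 121),
               (251, 136, 97), (252, 253, 191)]


def build_lut(stops, size=256):
    """Linearly interpolate between the anchor colors to get `size` RGB entries."""
    lut = []
    segments = len(stops) - 1
    for i in range(size):
        pos = i / (size - 1) * segments      # position along the stops, 0..segments
        k = min(int(pos), segments - 1)      # index of the segment we are in
        t = pos - k                          # 0..1 within that segment
        a, b = stops[k], stops[k + 1]
        lut.append(tuple(a[c] + (b[c] - a[c]) * t for c in range(3)))
    return lut


def color_to_gray(rgb, lut):
    """Inverse lookup: index of the LUT entry closest to `rgb` (squared RGB distance)."""
    return min(range(len(lut)),
               key=lambda i: sum((rgb[c] - lut[i][c]) ** 2 for c in range(3)))


def depth_to_gray(pixels, lut):
    """Convert a sequence of (r, g, b) pixels to gray levels, caching repeated colors."""
    cache = {}
    out = []
    for p in pixels:
        if p not in cache:
            cache[p] = color_to_gray(p, lut)
        out.append(cache[p])
    return out


LUT = build_lut(MAGMA_STOPS)
```

Calling `depth_to_gray` on the pixel list of a colorized depth map gives one gray level (0 to 255) per pixel, which can then be written back out as a grayscale image. Whether light or dark should mean "near" depends on the program reading the map, so the result may need inverting.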

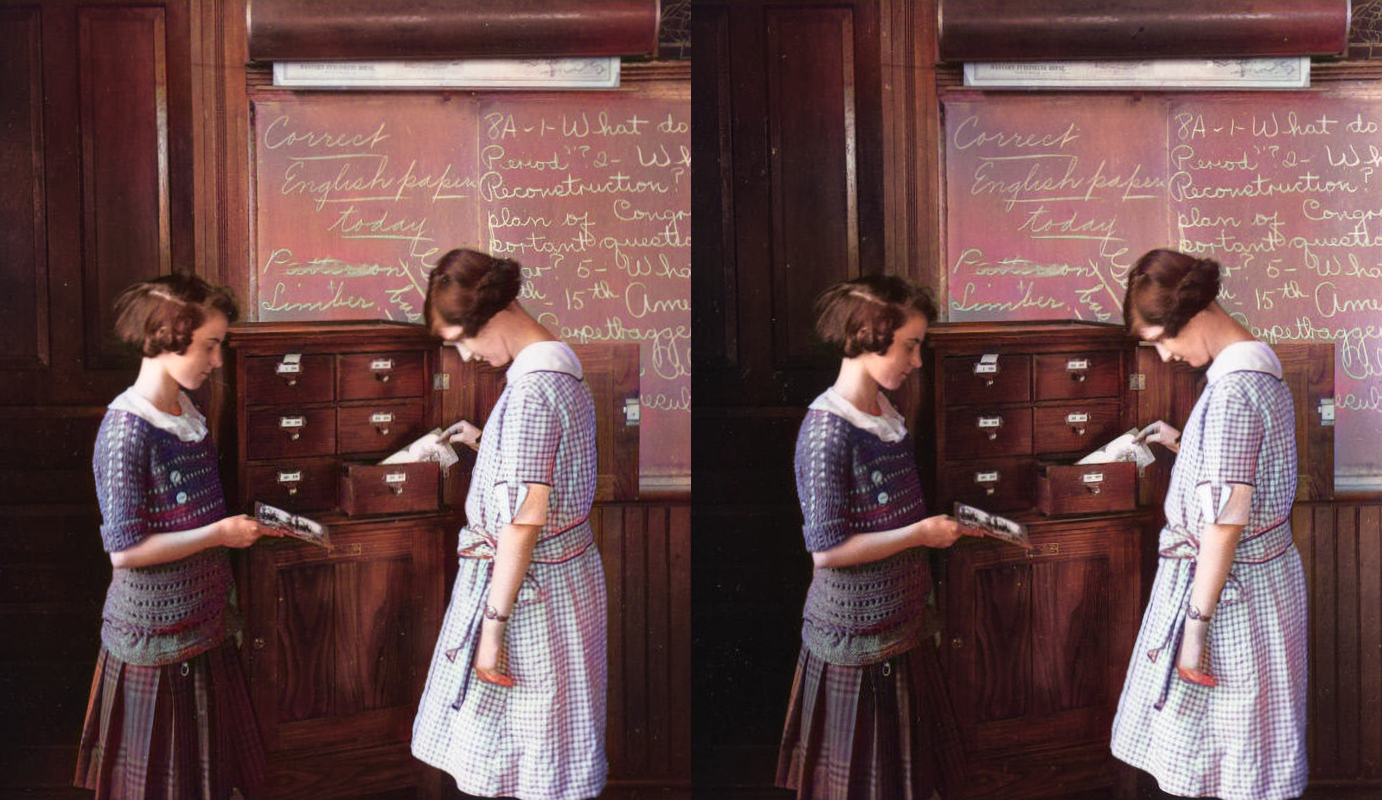

The second image shows the result of running the stereo through the DeepAI Image Colorization AI, which uses machine learning to guess at the colors in black and white images.

The 1st of 3 videos shows the result of using the colorized views, plus the depth maps from KeystoneDepth (converted to compatible grayscale), to generate a rotating stereovideo using StereoPhoto Maker. There are some visible artifacts, but this is already an amazing result, starting from a still black and white image.
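To give a feel for where those artifacts come from, here is a minimal Python sketch of depth-based view shifting on a single row of pixels. This is a generic technique, not a description of what StereoPhoto Maker does internally. The function name `shift_row`, the `max_shift` parameter, and the convention that 255 means nearest are all assumptions made for the example.

```python
def shift_row(colors, depths, max_shift):
    """Forward-warp one scanline so that nearer pixels move further sideways.

    colors: list of pixel values for the row.
    depths: list of gray levels 0..255 (ASSUMED convention: 255 = nearest).
    max_shift: shift in pixels applied to the nearest possible pixel.
    Returns a new row; None marks holes that nothing was warped into.
    """
    width = len(colors)
    out = [None] * width
    out_depth = [-1] * width                 # depth of whatever currently sits at each x
    for x in range(width):
        new_x = x + round(max_shift * depths[x] / 255)
        # When two pixels land on the same spot, the nearer one wins (occlusion).
        if 0 <= new_x < width and depths[x] > out_depth[new_x]:
            out[new_x] = colors[x]
            out_depth[new_x] = depths[x]
    return out


# A near object 'c' in front of a far background 'a', 'b', 'd'.
row = shift_row(['a', 'b', 'c', 'd'], [0, 0, 255, 0], 1)
```

Here `row` comes out as `['a', 'b', None, 'c']`: the near pixel slides over and covers `'d'`, and leaves a hole where it used to be. Real tools have to fill such holes somehow, which is one source of visible artifacts around foreground edges.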

The 2nd video was created using an additional AI program: VT-VL-Lab’s 3D-Photo-Inpainting (3PI), which fills in occluded surfaces in a 3D mesh model in a context-aware fashion and outputs 2D videos that pan around 3D scenes. This video is the result of running 3PI on the colorized left and right views separately and combining the outputs. You will notice stereo discrepancies where the AI filled in surfaces differently in the left and right views.

Finally, the 3rd video shows the use of 3PI again, but only on the right eye’s view, producing a 2D panning video from which I then derived this 3D panning stereovideo. Notice this is much cleaner than the previous video; however, this is the only possible camera motion that produces constant depth. (The technical reason: 3PI’s settings allow specification of x-shift-range, which is how far the video pans in each direction, in other words the absolute value of the horizontal motion, but not something like x-offset, which would move the entire 3D model horizontally. Therefore, if you try producing 2 videos with different x-shift-ranges and combine them side by side, you get the curiosity of a stereovideo which oscillates between normal and inverted depth! If you cut such a video into pieces, flip the left and right views for every other one, and reassemble, you can make a video which oscillates between normal depth and flat, but that’s the best you can do. I will share an example of the latter in a future post.)
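The oscillation is easy to see with a little arithmetic. The Python sketch below assumes, purely for illustration, that each video's virtual camera sweeps sinusoidally between minus and plus its x-shift-range; the actual motion path in 3PI may differ. The function names and the range values 0.01 and 0.03 are made up for the example.

```python
import math


def camera_x(shift_range, t):
    """ASSUMED pan: camera sweeps between -shift_range and +shift_range.

    t is time measured in pan cycles (t = 1.0 is one full back-and-forth).
    """
    return shift_range * math.sin(2 * math.pi * t)


def baseline(left_range, right_range, t):
    """Signed right-minus-left camera separation at time t.

    Positive means normal depth, negative means inverted, zero means flat.
    """
    return camera_x(right_range, t) - camera_x(left_range, t)


def baseline_after_flip(left_range, right_range, t):
    """Separation after swapping the two views wherever it would be negative."""
    return abs(baseline(left_range, right_range, t))


# Sample one pan cycle at quarter-cycle steps.
samples = [baseline(0.01, 0.03, t) for t in (0.0, 0.25, 0.5, 0.75)]
```

Both cameras pass through the same center point, so the separation is the difference of the two ranges multiplied by the same swinging factor. It is positive on one half of the pan, negative on the other, and zero in the middle. Swapping views on the negative half only takes the absolute value, so the depth still collapses to flat at each crossing. A constant horizontal offset between the two cameras is what would keep the separation fixed.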

I think you’ll agree this is a pretty amazing result—in motion and color, all from a black and white stereocard! It is awesome what AI can do.

TAGS: AI > colorized, MiDaS, 3D-Photo-Inpainting; depth maps; experiments; people; stereocards > Keystone-Mast, KeystoneDepth
